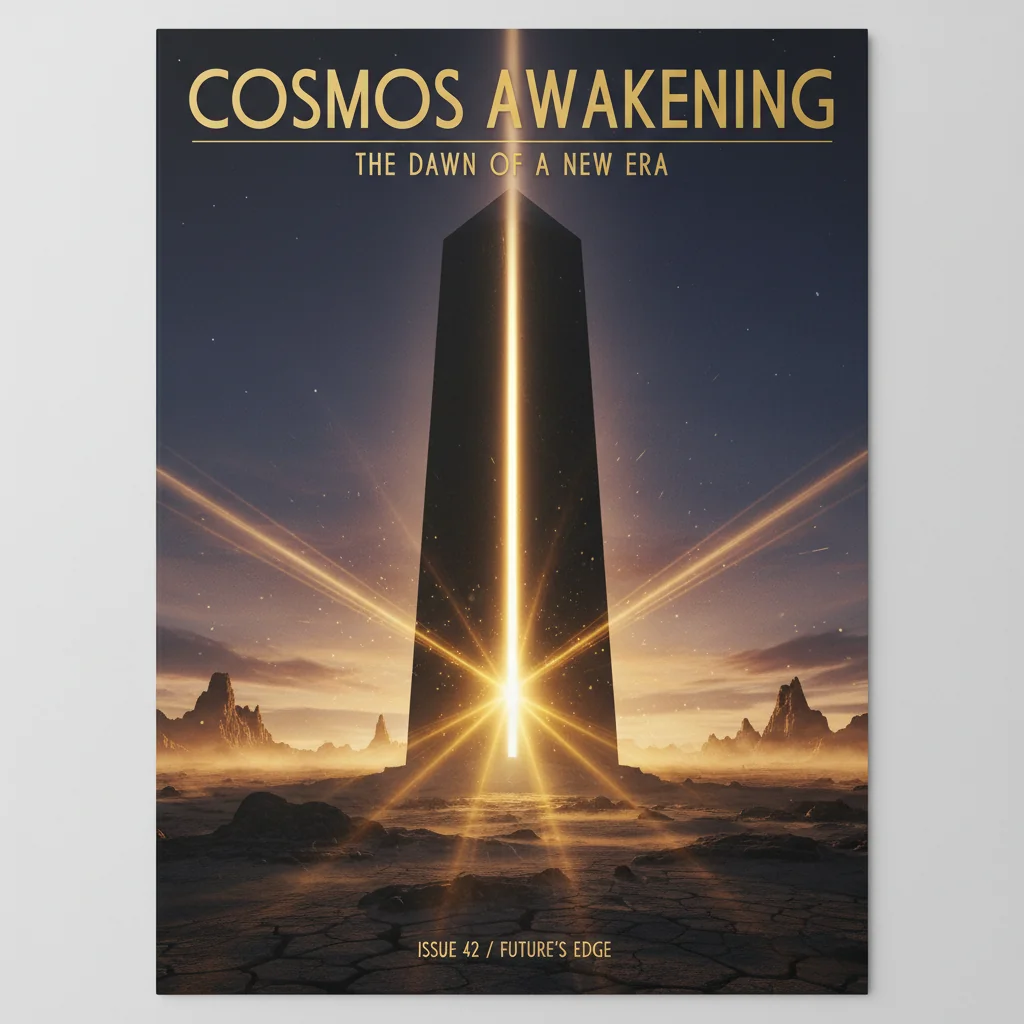

The 2026 AI Video Revolution: Beyond Text-to-Video

We are moving beyond simple text-to-video. In 2026, generative cinema is about architectural control and multimodal orchestration.

The Revolution is Multimodal.

In 2024, we were thrilled by 5-second clips of parrots in sunglasses. In 2026, we are orchestrating full-length sequences with spatial consistency, temporal control, and emotional depth. The revolution isn't coming; it's being rendered.

Beyond the Prompt

The amateur prompts; the architect orchestrates. The 2026 workflow is defined by:

- Spatial Anchors: Ensuring that a character in Scene A exists in the same physical space in Scene Z. Tools like Runway Gen-3 and Luma Dream Machine are now spatial engines, not just pixel generators.

- Multimodal Feedback: Using a combination of sketches, reference images, and audio cues to guide the AI's "Creative Director" sub-routines.

- Temporal Invariance: Eliminating the shimmer and flicker that once plagued the 'dream-like' early days of AI video.
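To make "shimmer and flicker" something you can put a number on, here is a minimal sketch of a flicker check. It is an illustration only, not how Runway, Luma, or any other tool measures temporal stability: the names `mean_intensity` and `flicker_score` are hypothetical, and it assumes each frame is a non-empty grayscale grid of pixel intensities between 0 and 1.

```python
from typing import Sequence

# Assumption: a frame is a grayscale grid, rows of pixel intensities in [0, 1].
Frame = Sequence[Sequence[float]]


def mean_intensity(frame: Frame) -> float:
    """Average pixel intensity of a single (non-empty) grayscale frame."""
    total = sum(sum(row) for row in frame)
    count = sum(len(row) for row in frame)
    return total / count


def flicker_score(frames: Sequence[Frame]) -> float:
    """Mean absolute change in average brightness between consecutive frames.

    0.0 means brightness is perfectly stable; larger values mean more flicker.
    """
    if len(frames) < 2:
        return 0.0  # a single frame cannot flicker
    means = [mean_intensity(f) for f in frames]
    diffs = [abs(b - a) for a, b in zip(means, means[1:])]
    return sum(diffs) / len(diffs)


# Four identical frames: perfectly stable brightness.
steady = [[[0.5, 0.5], [0.5, 0.5]]] * 4

# Frames alternating between darker and brighter: visible flicker.
flickery = [[[0.5, 0.5], [0.5, 0.5]], [[0.75, 0.75], [0.75, 0.75]]] * 2

print(flicker_score(steady))
print(flicker_score(flickery))
```

A global brightness average is a deliberately crude proxy. It catches exposure pumping but not localized shimmer, which would need a per-pixel or motion-compensated comparison.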

The Business of Cinema

For production agencies, this shift translates directly into ROI. A production that once took three months and $200k can now be prototyped in a week and finalized in three weeks. This doesn't replace the crew; it empowers the visionary.

Summary

At AIVideos.eu, we believe the next great film won't be made in Hollywood. It will be made in a terminal, by a director who knows how to link human intent with machine intelligence.

Stay obsidian.
